As we all know, there are 10 kinds of people in the world.

For those of you who haven’t read Pirsig’s Zen and the Art of Motorcycle Maintenance, he spends at least one chapter near the beginning talking about how we naturally tend to divide things into smaller pieces in an effort to understand them. The novice looks at a motorcycle and sees the visible things, like a seat, handlebars, and wheels, but the expert sees a fuel system, a cooling system, and the suspension. The same thing or system (a motorcycle) can be subdivided in different ways depending on what we want to do with it.

My tongue-in-cheek title of this post is an acknowledgement of the many ways we can categorize something like Automation Software, but for my purposes today, I’m making two categories: hammers and levels.

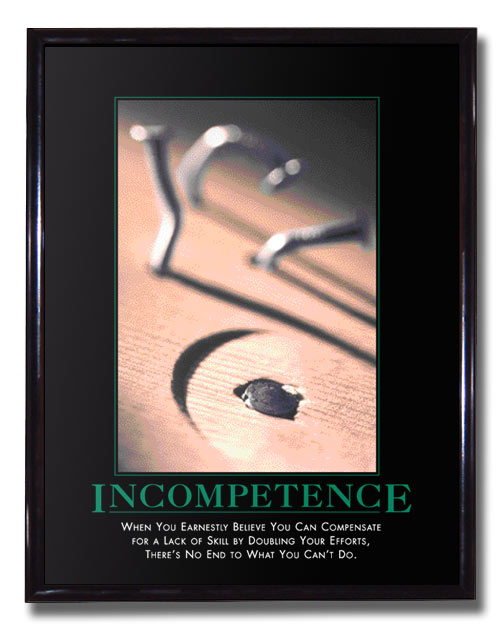

A carpenter carries both a hammer and a level, but the two have fundamentally different failure modes. If a hammer stops working, you’ll know it as soon as you try to use it. As long as it hammers in a nail, it’s a working hammer; it doesn’t matter if it’s rusty, dirty, scratched or dented. The level, on the other hand, is a measuring instrument. As novices, we assume that it comes from the factory pre-calibrated, and we happily hang our shelf or picture without testing it, but a professional carpenter knows that they have to check their levels for accuracy, or else the level is useless. You could use a level for years, but if one day it stopped being accurate, you probably wouldn’t know. This is a very different situation from the hammer.

Software in general, and automation software in particular, has similar examples. You never need to “calibrate” the Axis 1 Advanced proximity switch on a machine because if it doesn’t work, the machine won’t make parts (and you’ll know about it instantly, usually via a 2 am phone call). On the other hand, testing data collection logic is surprisingly difficult because the only way to test it is to compare it with a known-good equivalent. Assuming you created this data collection logic to automate away a manual process, the only measuring stick you can check it against is the manual process you’re replacing. Once the system is bought off and you get rid of the paper system, how do you prove that subsequent changes don’t break the data collection system?

It’s tempting to brush off the problem by saying that anyone who makes a subsequent change has to do a full regression test of the system, including the data collection system, but anyone who has worked in a real factory environment knows that this is unlikely to work in practice. Full regression tests are expensive.

In the greater software world, they use automated unit tests. They take the logic being tested and they run it through a series of automated checks to make sure nothing changes. This works well in an environment like PC programming, but is very difficult in practice for PLC programming because (a) you usually need a physical PLC to execute the logic (unless you have some kind of emulator) and (b) the people maintaining the system are likely not familiar with concepts like unit tests, and are likely to undervalue their importance.
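For anyone who hasn’t seen one, a unit test on the PC side can be as small as the sketch below. The `count_good_parts` function and the cycle records it reads are hypothetical stand-ins for a piece of data collection logic, not code from any real system.

```python
import unittest


def count_good_parts(cycles):
    """Hypothetical data collection logic: count cycles that made a good part."""
    return sum(1 for c in cycles if c["complete"] and not c["rejected"])


class CountGoodPartsTest(unittest.TestCase):
    def test_rejected_and_incomplete_cycles_are_excluded(self):
        cycles = [
            {"complete": True, "rejected": False},   # good part
            {"complete": True, "rejected": True},    # scrap
            {"complete": False, "rejected": False},  # aborted cycle
        ]
        self.assertEqual(count_good_parts(cycles), 1)

    def test_no_cycles_means_no_parts(self):
        self.assertEqual(count_good_parts([]), 0)
```

Saved to a file, this can be run with `python -m unittest`. If someone later changes `count_good_parts` so that it counts scrap, the first test fails immediately, which is exactly the "make sure nothing changes" check described above.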

This screams for a system-level solution. Take accounting for instance. Double-entry accounting (the use of debits and credits to force every transaction to be recorded twice) was deliberately designed to help catch manual entry errors. If your debits and credits don’t balance, you know you’ve made a mistake somewhere, and you go back and check your arithmetic.

In the automation world, the solution is to measure every input to the data collection system two ways, analyze and aggregate both separately, and compare the end results. Create a system warning or fault if the results don’t match. For instance, measure the amount of material going into the machine, and measure the amount of material exiting the machine, both as finished product, and scrap. If the input doesn’t match the sum of the outputs over the same time period, you know you have a problem. The system becomes self-checking (a hammer rather than a level).
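As a rough illustration of that comparison, here is a minimal sketch in Python rather than PLC code. The `ShiftTotals` fields, the kilogram units, and the 2% tolerance are all assumptions made up for the example; a real system would pick a tolerance based on how accurate its sensors are.

```python
from dataclasses import dataclass


@dataclass
class ShiftTotals:
    material_in_kg: float  # measured at the infeed
    finished_kg: float     # measured at the outfeed
    scrap_kg: float        # measured at the scrap bin


def balance_error_kg(totals: ShiftTotals) -> float:
    """Input minus the sum of the outputs; zero for a perfect balance."""
    return totals.material_in_kg - (totals.finished_kg + totals.scrap_kg)


def balance_fault(totals: ShiftTotals, tolerance_fraction: float = 0.02) -> bool:
    """True when the mismatch exceeds the allowed fraction of the input.

    The 2% default is an arbitrary assumption for this sketch.
    """
    allowed_kg = tolerance_fraction * totals.material_in_kg
    return abs(balance_error_kg(totals)) > allowed_kg


good_shift = ShiftTotals(material_in_kg=1000.0, finished_kg=940.0, scrap_kg=50.0)
bad_shift = ShiftTotals(material_in_kg=1000.0, finished_kg=900.0, scrap_kg=50.0)

print(balance_fault(good_shift))  # 10 kg unaccounted for, inside the 20 kg allowance
print(balance_fault(bad_shift))   # 50 kg unaccounted for, outside the allowance
```

The tolerance matters: two independent measurements will never agree exactly, so the check has to allow for normal sensor error without hiding a real problem.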

If you follow this route, you need to take care to avoid some common traps:

- Don’t re-use logic between the two sides (in fact, try to make them work differently)

- Try to use different sensors or sensing methods (can we measure the input by speed and duration, and the output by parts and scrap weight?)

- Record both, so if there is a discrepancy, you can check them against manual measurements
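Put together, those three precautions might look something like the sketch below. It assumes a hypothetical line where the input is estimated from line speed and run time, and the output from a part count plus scrap weight. Every name, unit, and number here is invented for illustration.

```python
import csv
import io


def input_length_m(line_speed_m_per_min: float, run_minutes: float) -> float:
    """Input side: material consumed, estimated from line speed and run time."""
    return line_speed_m_per_min * run_minutes


def output_length_m(good_parts: int, part_length_m: float,
                    scrap_kg: float, kg_per_m: float) -> float:
    """Output side: material accounted for by part count and scrap weight."""
    return good_parts * part_length_m + scrap_kg / kg_per_m


def record_row(writer, period: str, measured_in: float, measured_out: float) -> None:
    """Store both figures, not just the difference, so they can be audited later."""
    writer.writerow([period, f"{measured_in:.1f}", f"{measured_out:.1f}",
                     f"{measured_in - measured_out:.1f}"])


log = io.StringIO()  # stands in for a file or database table
writer = csv.writer(log)
writer.writerow(["period", "input_m", "output_m", "difference_m"])

# Hypothetical shift: 12 m/min for 400 min in; 9000 parts of 0.5 m plus 70 kg of scrap out.
record_row(writer, "shift-1",
           input_length_m(12.0, 400.0),
           output_length_m(9000, 0.5, 70.0, 0.25))

print(log.getvalue())
```

The two functions share no logic and no sensors, so a bug or a drifting sensor on one side is unlikely to be mirrored on the other. Because both raw figures are logged, someone can later compare either one against a manual count to find out which side is wrong.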

It sounds like more work, but making the system self-checking actually reduces the amount of testing you have to do, so it’s not as bad as you think. Besides, writing code is a lot more fun than testing it. We automate everyone else’s job, why not the boring parts of ours?

Well said! In a similar vein, I love fault logic that looks at a scenario from two different perspectives. One checks the other.